AsyncSLA: Towards a Service Level Agreement for Asynchronous Services

A quality model and a DSL to model Service-Level Agreements for asynchronous services, built on top of the AsyncAPI specification and with tool support available.

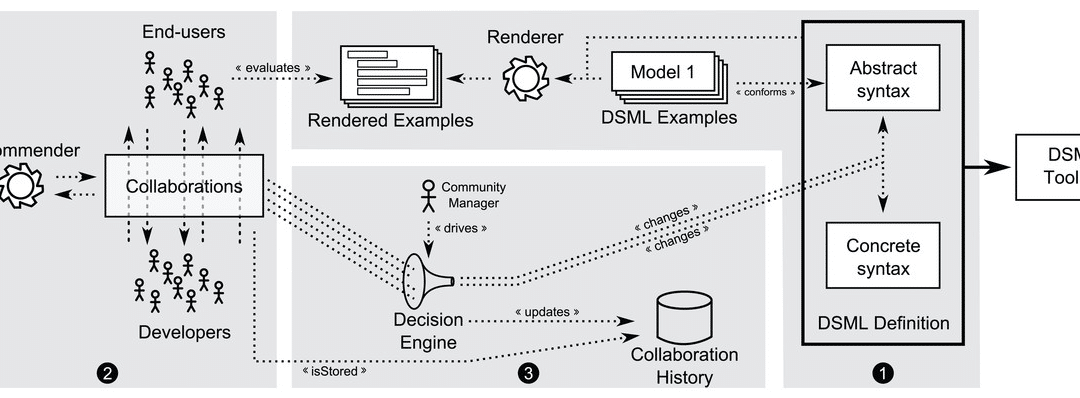

We propose to make the development process for DSLs community-aware, meaning that all stakeholders have the chance to participate, discuss and comment during the DSL definition phase.

Access control is a key element of managing security in any user interface. This is the first attempt to extend conversational user interfaces with access-control capabilities.

We tried to become rich by creating a model-driven chatbot solution. See what happened next.

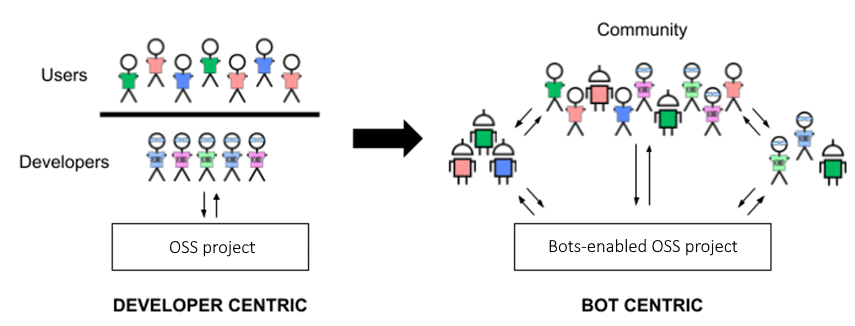

Easily design and deploy community bots to help you manage your open source projects.