Views on models: a survey of existing approaches

A detailed comparison of all methods and tools for creating views on your models, so that you can choose the one that works best for you.

Code generation from UML models to a number of SQL and NoSQL platforms, including the possibility of running OCL queries on top of this combination of platforms.

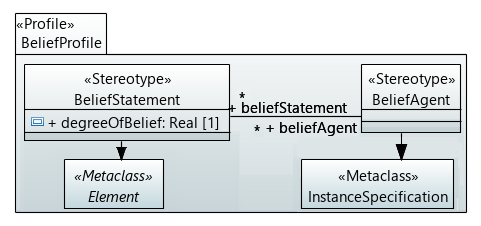

We propose assigning a degree of belief to model statements, expressed as a probability (called credence, in statistical terms) that quantifies this subjective belief. We discuss how it can be represented using current modeling notations, and how to operate with it in order to make informed decisions.

A common problem when modeling software systems is the lack of support to specify how to enforce privacy concerns in data models. In this post, we propose a profile to define and enforce privacy concerns in UML class diagrams. Models annotated with our profile can be used in model-driven methodologies to generate privacy-aware applications.

Details of our UML Profile to model OData Web APIs. Once your UML model is annotated with OData stereotypes, you can automate the generation of your OData definition files.
