Eric Umuhoza explains his work on mobile IFML, an extension of IFML focusing on mobile devices (to be presented at the MobiWIS conference). Enter Eric.

Front-end design is a more complex task in mobile applications, mainly because of: (1) the small screens of mobile devices, which require extra interaction-design effort to make the best use of the limited space available; (2) the interaction of mobile apps with other software and hardware features installed on the device they run on; and (3) the user interaction itself, which is mostly performed through precise gestures on the screen or through other sensors. These interactions often depend on the device, the operating system and the application itself.

Some approaches (UsiXML, IFML, etc.) apply model-based techniques to multi-device user interface development. However, none of them specifically addresses the needs of mobile application development. As a result, front-end development for mobile applications continues to be a costly and inefficient process, where manual coding is the predominant development approach, reuse of design artifacts is low, and cross-platform portability remains difficult.

A platform-independent user interaction modeling language can bring several benefits to the development of mobile application front-ends. It improves the development process by fostering separation of concerns in user interaction design, so that each developer role can work as efficiently as possible. It also makes it possible to communicate interface and interaction design to non-technical stakeholders, permitting early validation of requirements.

This paper exploits the extensibility of the OMG standard Interaction Flow Modeling Language (IFML) and proposes an extension tailored to mobile applications.

IFML has been chosen mainly because:

- It is an OMG standard for interaction flow modeling;

- The composition of a mobile app's interface can be expressed with the core IFML concepts of ViewContainers and ViewComponents;

- It is extensible; and

- We are already familiar with it.

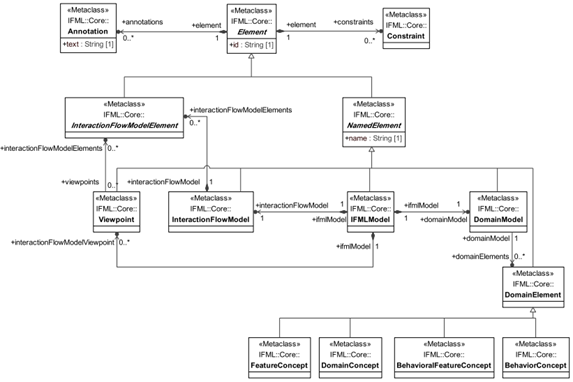

IFML is designed for expressing the content, user interaction and control behavior of the front-end of software applications. Its metamodel uses the basic data types from the UML metamodel, specializes a number of UML metaclasses as the basis for IFML metaclasses, and presumes that the IFML DomainModel is represented in UML. The high-level description of the IFML metamodel is structured into the following areas of concern: IFMLModel (which represents an IFML model), InteractionFlowModel, InteractionFlowModelElement, DomainModel and NamedElement.

The mobile extensions we’re proposing fall into the following three categories:

- View Containers and View Components.

Even though the composition of a mobile app's interface can be expressed with the core IFML concepts of ViewContainers and ViewComponents, some concepts may be extended to better reflect the terminology and properties of mobile apps. This category comprises the concept of Screen, defined as the top container of a mobile app, and the concept of MobileComponent, which represents mobile-specific view components such as tickers and buttons.

- Mobile Context.

The Context is a runtime aspect of the system that determines how the user interface should be configured and which content it may display. It is particularly relevant in mobile apps, which must exploit all the available information to deliver the most efficient interface.

- Mobile Events.

The mobile events proposed in this paper fall into three groups:

Events generated by the interaction of the user such as LongPress, swipe, etc.;

Events triggered by the mobile device features such as sensors, battery, etc.; and

Events triggered by user actions related to the device components such as taking a photo, recording a video or using the microphone.
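To make the three categories more concrete, here is a minimal sketch of how the extension concepts could be written down as plain Python classes. It is only an illustration, not the actual IFML metamodel: `Screen`, `MobileComponent`, `ViewContainer`, `ViewComponent` and `Context` mirror the concepts named above, while `MobileEventKind`, the `Context` attributes, the event names other than `LongPress` and `Swipe`, and the `classify` helper are assumptions made for this example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class MobileEventKind(Enum):
    """The three groups of mobile events described above."""
    USER_INTERACTION = "user interaction"   # e.g. LongPress, swipe
    DEVICE_FEATURE = "device feature"       # e.g. sensors, battery
    DEVICE_ACTION = "device action"         # e.g. taking a photo


@dataclass
class ViewComponent:
    name: str


@dataclass
class MobileComponent(ViewComponent):
    """Mobile-specific view component such as a button or a ticker."""
    kind: str = "button"


@dataclass
class ViewContainer:
    name: str
    components: List[ViewComponent] = field(default_factory=list)


@dataclass
class Screen(ViewContainer):
    """Top container of a mobile app."""


@dataclass
class Context:
    """Runtime aspect used to configure the interface.

    The two attributes are hypothetical examples of context dimensions.
    """
    device: str
    network: str


# Hypothetical event names; only LongPress and Swipe come from the text.
EVENT_KINDS: Dict[str, MobileEventKind] = {
    "LongPress": MobileEventKind.USER_INTERACTION,
    "Swipe": MobileEventKind.USER_INTERACTION,
    "BatteryLow": MobileEventKind.DEVICE_FEATURE,
    "PhotoTaken": MobileEventKind.DEVICE_ACTION,
}


def classify(event_name: str) -> MobileEventKind:
    """Return the group an event belongs to, or raise KeyError if unknown."""
    return EVENT_KINDS[event_name]


home = Screen("Home", [MobileComponent("Refresh", kind="button")])
```

Because `Screen` subclasses `ViewContainer` and `MobileComponent` subclasses `ViewComponent`, anything written against the core concepts keeps working with the mobile ones, which is the point of building on IFML's extension mechanism.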

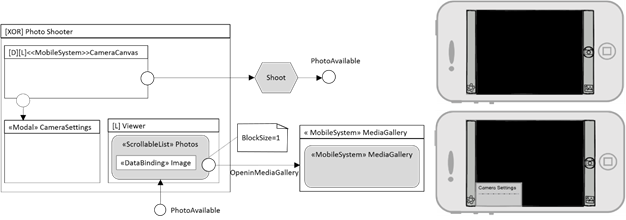

The next figure shows a simple example of a mobile IFML model, corresponding to photo shooting. The “PhotoShooter” screen comprises a mobile system ViewContainer, “CameraCanvas”, which denotes the image visualization view of the camera. From it, one mobile user event opens a modal screen for setting the camera parameters and another mobile user event triggers the mobile action that shoots the photo. When the image becomes available, the internal viewer of the application is activated, from which an event lets the user open the photo in the mobile system media gallery. The internal viewer is modeled as a scrollable list with block size = 1, so that it shows one image at a time.

Example of a mobile IFML model
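The same flow can be approximated in code as a small table of transitions. This is a rough sketch under stated assumptions rather than generated or official IFML tooling output: only `PhotoShooter` and `CameraCanvas` are names taken from the model, whereas `CameraSettings`, `Shoot`, `PhotoViewer`, `MediaGallery`, all event names, and the helpers `next_element` and `run` are hypothetical and introduced for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class Flow:
    """One interaction flow: an event on a source element leads to a target."""
    source: str
    event: str
    target: str


@dataclass
class ScrollableList:
    """List viewer that shows `block_size` items per block."""
    name: str
    block_size: int

    def block(self, items: Sequence[str], index: int) -> List[str]:
        start = index * self.block_size
        return list(items[start:start + self.block_size])


# Flows of the PhotoShooter screen (element and event names are assumed).
FLOWS = [
    Flow("CameraCanvas", "openSettings", "CameraSettings"),  # modal screen
    Flow("CameraCanvas", "shoot", "Shoot"),                  # mobile action
    Flow("Shoot", "photoAvailable", "PhotoViewer"),          # internal viewer
    Flow("PhotoViewer", "openInGallery", "MediaGallery"),    # system gallery
]


def next_element(source: str, event: str) -> Optional[str]:
    """Return the target reached from `source` on `event`, if any."""
    for flow in FLOWS:
        if flow.source == source and flow.event == event:
            return flow.target
    return None


def run(start: str, events: Sequence[str]) -> str:
    """Follow a sequence of events and return the element reached."""
    current = start
    for event in events:
        target = next_element(current, event)
        if target is None:
            raise ValueError(f"no flow from {current!r} on {event!r}")
        current = target
    return current


# The internal viewer: a scrollable list with block size = 1.
viewer = ScrollableList("PhotoViewer", block_size=1)
```

For instance, `run("CameraCanvas", ["shoot", "photoAvailable", "openInGallery"])` ends at `"MediaGallery"`, and with block size 1 each call to `viewer.block(...)` returns a single image, matching the "one image at a time" behavior described above.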

For further details on our current work on mobile IFML, see the full paper and the Automobile project website.

