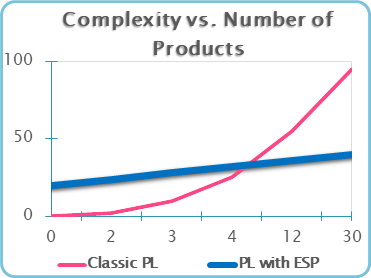

Systems always get bigger and fatter over time, i.e. they never become simpler than they are today. System evolution is hard to manage unless done systematically using an appropriate methodology i.e. processes, methods and tool support. For a Product Line (PL), this is of utmost importance, since an increase in the number of features or products leads to an exponential rise in system complexity, see Figure 1.

Figure 1: Complexity vs. Number of Features/Products

In the past, we have seen at least two anti-patterns related to the way product lines are developed:

- Clone-and-Own: copies existing products and individually evolves products in isolation

- One-Size-fits-All: builds all PL features in a single product to deliver various features as a preconfigured product

Both approaches exponentially increase system complexity with the growing number of products.

Our solution, the Evolving Systems Paradigm (ESP), delivers a methodology to solve this problem, increasing the longevity of a business domain to match its market needs.

Evolving Systems Paradigm in a nutshell

ESP is the PL methodology to directly contain the risk of the “exponential rise in complexity” for rapidly evolving PLs.

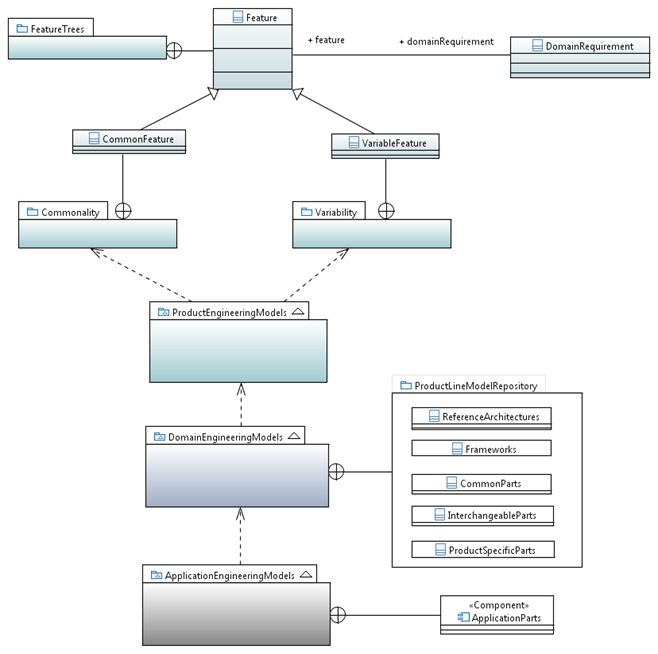

ESP organises PL development activities around Product, Domain and Application Engineering teams, see Figure 2.

- Product Engineering approaches the problem by analysing the PL needs. It identifies features and organises them into Feature Trees to enhance communication with clients. Furthermore, Feature Models are elaborated with a separation into common and variable features within the scope of the PL. PL requirements are elicited, formally defined and related to identified features.

- Domain Engineering defines logical concepts and domain elements such as various kinds of Parts needed to fulfil the requirements. Parts are mapped to features. Reference architectures and frameworks are identified to match domain needs and used to accommodate Parts and their interactions.

- Application Engineering provides physical components used to build each PL product. It identifies various technologies e.g. embedded real-time tooling including implementation languages, compilers, hardware and deployment environments.

Figure 2: ESP views PL development as a set of Product, Domain and Application Engineering activities

Parts as a building unit for Product Lines

ESP is based on three major types of entities used to build all products of a PL. Let’s take a closer look into the content of the products and see what they are made of. We discover entities that may be identical, similar or unique in functionality:

- Initially, we notice identical entities scattered throughout each of the products. We identify and factor out all these entities away from the products and store a single instance of such entities into a PL Model Repository, PLMR. We name common entities: Core Parts, CPs.

- Next, we notice variants of entities repeating themselves in products. We identify all variants of a single entity. We collect and remove all variants away from the products and store a single entity instance in the PLMR. We name variable entities: Interchangeable Parts, IPs.

- Finally, what remains are product specific entities directly contributing to the uniqueness of the product. We store an instance of each entity into the PLMR. We name unique entities: Product specific Parts, PsPs.
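The three-way split above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: real ESP Parts are executable UML models, whereas here each product is a plain mapping from entity name to a variant label. The function name `classify_parts`, the example data, and the rule that anything neither identical everywhere nor unique to one product counts as an IP are all hypothetical.

```python
from collections import defaultdict

def classify_parts(products):
    """Split entities into Core (CP), Interchangeable (IP) and Product specific (PsP).

    `products` maps product name -> {entity name: variant label}.
    Assumed rule: identical in every product -> CP, present in exactly one
    product -> PsP, everything else -> IP.
    """
    occurrences = defaultdict(dict)  # entity -> {product: variant label}
    for product, entities in products.items():
        for entity, variant in entities.items():
            occurrences[entity][product] = variant

    result = {"CP": set(), "IP": set(), "PsP": set()}
    for entity, by_product in occurrences.items():
        distinct_variants = set(by_product.values())
        if len(by_product) == 1 and len(products) > 1:
            result["PsP"].add(entity)
        elif len(by_product) == len(products) and len(distinct_variants) == 1:
            result["CP"].add(entity)
        else:
            result["IP"].add(entity)
    return result

# Hypothetical example data (only "Propulsor" and "ProductC" appear in the text).
EXAMPLE_PRODUCTS = {
    "ProductA": {"Kernel": "v1", "Propulsor": "plain"},
    "ProductC": {"Kernel": "v1", "Propulsor": "graphable", "ReportExport": "v1"},
}
print(classify_parts(EXAMPLE_PRODUCTS))
```

In this toy data, Kernel is shared unchanged, Propulsor exists in two variants, and ReportExport occurs in one product only.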

Variability and Binding Specifications

ESP enables Domain and Application engineers to separate out Part variability into domain and application variability specifications. Each variability dimension is expressed as a set of instructions including any required static and dynamic models needed to transform an IP into its Physical Parts[1] i.e. original part variants.

Binding specifications define how to wire Physical Parts into architectures.

Each Part type is annotated with its variability and binding specifications.

[1] Physical Parts are Part Variants and shall not be confused with physical components used for deployment e.g. jars, exes, libs etc.

Using Parts, Variability and Binding Specifications

The ESP process defines the following two steps:

- During analysis, we factor out variant parts into IPs and store the IPs and their related specifications, together with the CPs and PsPs, in the PLMR to enable their reuse.

- Populating the PL with products built using entities from the Model Repository:

  - Product creation starts at Variability Resolution Time when variability specifications are executed on IPs to transform Logical IPs into corresponding Physical Parts.

  - Product composition continues at Binding Time, when binding specifications are executed on Physical Parts to bind them into larger structures.

How it works

ESP is an invasive software composition technology. Parts are executable UML models: static and dynamic, formal and complete. Model transformations inject parts into the PL architecture to obtain products.

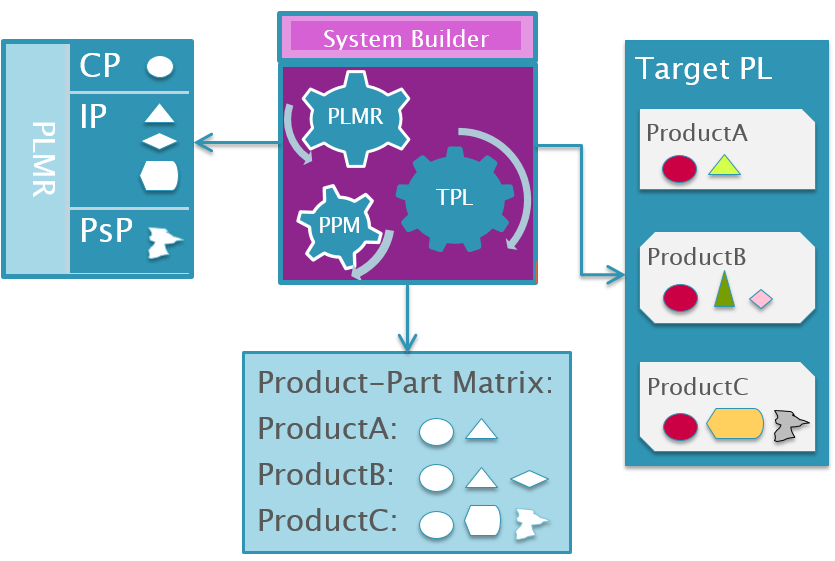

The process of populating the target PL is given in Figure 3. Each product is a unique composition of Physical Parts composed into a specific Product Architecture. Product-Part Matrix specifies each product as a composition of CPs, IPs, PsPs and respective Binding and Variability Specifications. System Builder uses parts from the PLMR and the Product-Part Matrix to build complete product lines.
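As a rough illustration of how a Product-Part Matrix could drive the System Builder, the Python sketch below models the PLMR as a dictionary holding every Part once, and each matrix row as the Parts (and chosen IP variants) of one product. All identifiers here (`PLMR`, `PRODUCT_PART_MATRIX`, `build_product`, `build_product_line`, and every part or product name except Propulsor and ProductC) are hypothetical, and Parts are reduced to strings rather than executable UML models.

```python
# Hypothetical PLMR: every Part is stored exactly once.
PLMR = {
    "Kernel": {"kind": "CP"},
    "Propulsor": {"kind": "IP", "variants": {"plain", "graphable"}},
    "ReportExport": {"kind": "PsP"},
}

# Hypothetical Product-Part Matrix: product -> {part name: chosen variant or None}.
PRODUCT_PART_MATRIX = {
    "ProductA": {"Kernel": None, "Propulsor": "plain"},
    "ProductC": {"Kernel": None, "Propulsor": "graphable", "ReportExport": None},
}

def build_product(name, matrix=PRODUCT_PART_MATRIX, repo=PLMR):
    """Return the sorted list of Physical Parts that make up one product."""
    physical_parts = []
    for part_name, variant in matrix[name].items():
        part = repo[part_name]
        if part["kind"] == "IP":
            # An IP must be resolved to one concrete variant per product.
            if variant not in part["variants"]:
                raise ValueError(f"{part_name} has no variant {variant!r}")
            physical_parts.append(f"{part_name}[{variant}]")
        else:
            # CPs and PsPs are used as stored.
            physical_parts.append(part_name)
    return sorted(physical_parts)

def build_product_line(matrix=PRODUCT_PART_MATRIX, repo=PLMR):
    """Build every product listed in the matrix."""
    return {name: build_product(name, matrix, repo) for name in matrix}

print(build_product_line())
```

The point of the layout is that adding a product means adding a matrix row, not copying Parts.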

Variability and Binding specifications are expressed using stereotypes defined in the ESP UML profile. Initially, the UML profile is applied and stereotypes allocated to corresponding entities. Next, stereotypes are materialised resulting in fully-blown Physical Parts, variants. Finally, Physical Parts are interconnected using interfaces and supplementary model elements into a fully functional product. Each intermediate model is persisted for debugging purposes. The UML profile is retracted, thus enabling processing of the next part.

Build and deploy

The output of the composition process is a PL baseline comprising executable product models. Several types of product models are created during the population of the Target PL:

- Product Domain Executable UML Models

- Product Implementation Models

- Product Build System Models

Product Domain Executable models are compiled by the model compiler into Implementation Models. Product Implementation Models are compiled by the Product Build System to obtain the product executables. Out-of-the-box implementation models are available for C, C++ and Java programming languages. Additional programming languages are available on request.

Product implementation models are promptly compiled, debugged and tested. The System Builder builds a single product as a composition of 7 +/- 2 IPs within 60 seconds, thus making the modify-compile-test cycles a programmer’s reality.

Benefits of the Evolving Systems Paradigm

ESP methodology is non-disruptive i.e. it is iterative, incremental and embraces legacy implementations. Existing systems are refactored, organised into parts with which the PLMR is populated. Legacy implementations are easily reused by creation of legacy interface models. Parts are bound to legacy interface models with corresponding Binding Specifications.

Each part in the PLMR is additionally equipped with following information:

- Test Drivers and Unit Tests modules per Physical Part

- Traceability to Key Features, Use Cases, User, Domain and Application Requirements, Technical System Requirements including Integration, Verification and Validation test cases, Deployment Plans and Scripts

- Complete implementation model and respective documentation set is generated for the product

- Automated collection and presentation of Test Results

When the PLMR is populated with Parts, ESP may be used to show that the concrete PL products may be regenerated from the models. Once proof has been obtained, we use the PLMR, instead of the concrete products, to evolve the PL completely covering the needs of the business domain.

Green field PL projects are expected to reap all benefits from the very first product deployment, enabling unprecedented product line longevity and supporting the business domain to deliver its full response to its market needs.

Milan has consulted for Swiss, European, American and Chinese industries for the past 30 years, gaining valuable experience in both the industrial and financial IT sectors. Applying a pragmatic approach to software development ensures that project deadlines are met, the proposed budget is sufficient to complete the defined tasks and all promised functionality is delivered.

He believes that rationally programmed machines e.g. those using formal and complete models, can free humans from executing repetitive engineering tasks and exploits this fact to provide unmatched quality solutions.

The motto he uses persistently:

“Never let a Human/Machine do a Machine/Human Job”

“System evolution is hard to manage unless done systematically using an appropriate methodology i.e. processes, methods and tool support.”

There is no substantiation for this claim. Our research in the 1990s back at AT&T found that exactly the opposite was true. The documented, “shared” processes earned the organization a high mark on ISO audits. But our empirical research showed no correlation between actual practice and the documented process. Indeed, the people were looking to machines to orchestrate and automate an essentially human problem. Minds, not machines, deal with complexity. Elon Musk has recently been articulate about this. The Japanese have long known it. Toyota has been replacing machines with people these past few years, because machines don’t learn (https://qz.com/196200/toyota-is-becoming-more-efficient-by-replacing-robots-with-humans/).

I found, studied, and documented an early counterexample, which was the Borland QPW team. Their quality and productivity were orders of magnitude beyond what a controlled process offered. And the architecture proved itself to be maintainable. See: James O. Coplien and Jon Erickson. Examining the Software Development Process. Dr. Dobb’s Journal of Software Tools, 19(11):88-95, October 1994.

Gabriel would further reflect on these findings in his “Patterns of Software” book.

I am arguing neither against domain analysis, nor product lines, nor against architecture: only the claims for systematization and tools. The problem is an intellectual and corporate organization problem rather than one that process and tools can fix. I offer this as a statement backed by research, evidence, and numbers. For a more balanced and practical view — again, substantiated by research and experience — see the Lean Architecture book.

As for the rest, anything follows from a fallacy. I’m happy to take this discussion further, but I do hope you’ll be able to produce some evidence of the claims.

Thanks for this everlasting question. I’ll try to answer it here and bring more light to ESP.

~

1st: Prologue: of Humans and Machines

My favourite motto is: “Never let a Human/Machine do a Machine/Human Job”

I completely agree humans are much better at mind jobs i.e. machines cannot do human mind jobs properly – but they can help and that is why we use them.

~

2nd: of Pain and Solutions

In the beginning – there are problems. These may hurt you, bring sleepless nights but in any case they will make you think. You will think and it will hurt even more since you can find no solution. Pain stops when you do – a solution is born.

~

3rd: what is ESP really meant to do?

ESP does not attempt to systemise “processing of pain” – this is definitely the mind part. It attempts to organise perceived domain solutions aiming at 100% reuse. Since solutions contain a vast amount of information, let’s use machines to help.

~

4th: How does ESP work?

Viewpoint definition:

Imagine if you wanted to visualise your own personal evolution all the way back from birth. The viewpoint used does not define pain driving the evolution. The viewpoint defines elements of pain solutions in terms of structure and behaviour.

Now imagine if this viewpoint also defined a time potentiometer (a knob with year marks) and let you turn it left and right. What would you see?

You could visualise your own evolution as described by modifications to CPs, IPs and PsPs. You clearly have a point in time before and one after the pain is resolved. You can reason about what happened, when it happened, and how it affects structure and behaviour. You are now able to gain deep insight into your domain.

Evolution is a graph of transformable parts with a time dimension.

ESP organises timed domain solutions into graphs with nodes containing strictly incremental structural and behavioural specifications, if any (none for leaf nodes).

It is our chessboard against the tooth of time, to enable humans to foresee events and reduce risks.
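The "time knob" idea can be illustrated with a small Python sketch. It is a deliberate simplification and not the ESP implementation: the history is a flat list of dated increments instead of a graph, each increment just sets or removes one part's variant, and the name `state_at`, the years and the Kernel part are hypothetical.

```python
def state_at(increments, year):
    """Replay all increments dated up to and including `year`.

    `increments` is a list of (year, part name, change) tuples.
    A change of None removes the part from the state.
    """
    state = {}
    # Replay in chronological order; sorted() is stable for equal years.
    for when, part, change in sorted(increments, key=lambda inc: inc[0]):
        if when > year:
            break
        if change is None:
            state.pop(part, None)
        else:
            state[part] = change
    return state

# Hypothetical history of one product.
HISTORY = [
    (2015, "Propulsor", "plain"),
    (2018, "Propulsor", "graphable"),
    (2016, "Kernel", "v1"),
]

# Turning the knob to different years yields different snapshots.
print(state_at(HISTORY, 2016))
print(state_at(HISTORY, 2018))
```

Because every node holds only an increment, any earlier state can be recomputed rather than stored.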

5th: putting it all together

In order to make this viewpoint understandable it needs a context. It is this context that I call the ESP methodology. I do not describe a sturdy control mechanism but rather a series of steps (what needs to be done with perceived / obtained solutions) that enable the individual to apply the underlying tool to achieve timely results.

Systems will fail when humans do not have timely solutions to manage change.

6th: self-critical reflection

I agree ESP may not hold for organic, living mechanisms or stellar bodies that are not made (yet) of ESP-like parts. On the other hand, many evolving mechanisms in industry lend themselves very elegantly to being described via Parts.

~

Epilogue:

I hope I could shed some more light on the topic. The better I can partition complex mechanisms, the more parts I will have and then I can try to make more sense of it all. Myself, I tend to call ESP more a Paradigm and less a Methodology, yet there are processes, methods and a tool to it.

Here are some definitions to clarify the above:

A View is a representation of an architecture. Architecture Views may be graphical, tabular, textual or any combination of these (as an architecture ‘product’).

A Viewpoint is the perspective taken – for example, an End User will have a different Viewpoint than a CIO or business manager. In other words, an architecture is represented in a View that is appropriate to the audience’s Viewpoint.

OMG 2010

A viewpoint is a specification of the conventions and rules for constructing and using a view for the purpose of addressing a set of stakeholder concerns

Hi James,

“…Minds, not machines, deal with complexity…”

I practically experienced both worlds in software production, and suffered myself from early fantasies of controllability. But I disagree with the above quote as an absolute one. For sure there are certain aspects of complexity that humans are (still, and probably for some time to come) better with than machines. But it is likewise true that concepts, abstractions, and machines help us to manage the beast. As humans we manage complexity among other things by inventing things.

Looking at such kinds of inventions that already exist and do such work to an immense degree – operating systems, the web… – I do not understand why we shouldn’t strive for further improvements. My understanding of Toyota’s approach is exactly that: to continue to improve, questioning all our assumptions regularly. It was right to do that in the 90s for the old methods; in my opinion it is right to question what we take for granted today.

Kind regards

Andreas

Hi Milan,

thank you for sharing this interesting description of your ESP approach, it sounds like this required quite an amount of thought and work.

Concerning this step:

“…variability specifications are executed on IPs to transform Logical IPs into corresponding Physical Parts…”

“…stereotypes are materialised resulting in fully-blown Physical Parts, variants…”

What exactly is happening here? Is this basically a model to model projection/transformation/augmentation/…? Moreover, I’d be interested how you manage relations between Parts in general. Is there, e.g. some inheritance relation between an IP and a PP? If not, why not?

I like the list below “Each part … equipped with following information”, such equipment can be quite valuable.

Kind regards

Andreas

Hi Andreas,

Thanks for your questions which will allow me to explain the internals of ESP.

Since my replies are voluminous, I’ll reply to each question with a single comment.

>Andreas: it sounds like this required quite an amount of thought and work

>Milan: all in all it took me 4-5 person-years to get where I am today.

Thanks and regards

Milan

Hi Andreas,

>Andreas: “…variability specifications are executed on IPs to transform Logical IPs into corresponding Physical Parts…”

“…stereotypes are materialised resulting in fully-blown Physical Parts, variants…”

What exactly is happening here?

>Milan:

-Let’s imagine that the brilliant PL architect has a task to evolve IP1 only for ProductC of the PL.

Preconditions:

-Model repository already contains executable UML models for IP1, its variability and binding specifications that now need to be modified

Architect’s Activities:

-The architect elaborates a solution, see my answer to James, e.g. based on a new inheritance relationship where one of the IP1 classes, e.g. class Propulsor, is modelled to inherit from a superclass GraphableObject to evolve additional functionality i.e. make the Propulsor viewable in a graph.

-The architect defines the new superclass GraphableObject in the extended Variability Model within the Model Repository.

-The architect defines an “Inheritance” stereotype within the Domain Variability Specification (constraining the application of this stereotype only in case of ProductC):

NanoTechnology::NanoTechnology::BusinessModel::Propulsor

AddInheritance

Class

SuperClassName

GraphableObject

ESP does the following:

1. SystemBuilder starts building ProductC and applies the ESP profile to the currently loaded model

2. SystemBuilder opens up the Domain Variability Specification for IP and applies all specified stereotypes e.g. stereotype “Inheritance” to class Propulsor

3. The intermediate model is persisted for debugging purposes

4. SystemBuilder iterates over all ESP stereotypes and materialises them to create Physical Parts i.e. IP Variants:

– a new generalisation relationship is inserted into the model from superclass GraphableObject to subclass Propulsor

5. The ESP profile is retracted

6. The model is persisted

7. SystemBuilder is ready to process the next IP by going to step 1.
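To make the apply, materialise and retract sequence concrete, here is a minimal Python sketch. It is not the SystemBuilder code: the model is reduced to a dictionary of classes, the function name `resolve_variability` and the tuple layout of the specification are hypothetical, and only the AddInheritance stereotype from the example above is handled.

```python
import copy

def resolve_variability(model, variability_spec, snapshots=None):
    """Apply stereotypes, materialise them, then retract the profile.

    `model` maps class name -> {"superclasses": [...], "stereotypes": [...]}.
    `variability_spec` is a list of (class name, stereotype name, properties).
    Returns a new model (the Physical Part); the input is left untouched.
    """
    model = copy.deepcopy(model)  # never mutate the repository copy

    # Step 2: annotate model elements with the specified stereotypes.
    for class_name, stereotype, props in variability_spec:
        model[class_name]["stereotypes"].append((stereotype, props))

    # Step 3: keep the intermediate model for debugging.
    if snapshots is not None:
        snapshots.append(copy.deepcopy(model))

    # Step 4: materialise each stereotype into real model structure.
    for element in model.values():
        for stereotype, props in element["stereotypes"]:
            if stereotype == "AddInheritance":
                element["superclasses"].append(props["SuperClassName"])
            else:
                raise ValueError(f"unknown stereotype {stereotype}")

    # Step 5: retract the profile, leaving a plain model behind.
    for element in model.values():
        element["stereotypes"] = []
    return model

ip1 = {
    "Propulsor": {"superclasses": [], "stereotypes": []},
    "GraphableObject": {"superclasses": [], "stereotypes": []},
}
spec_for_product_c = [
    ("Propulsor", "AddInheritance", {"SuperClassName": "GraphableObject"}),
]
snapshots = []
physical_part = resolve_variability(ip1, spec_for_product_c, snapshots)
print(physical_part["Propulsor"]["superclasses"])  # ['GraphableObject']
```

Working on a copy mirrors the idea that the IP in the repository stays unchanged while each product gets its own variant.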

Thanks and regards

Milan

Hi Andreas,

>Andreas: Is this basically a model to model projection/transformation/augmentation/…?

>Milan: Variability Resolution is basically a model transformation of 3 input models into a single output model:

1. the input IP model,

2. the input Domain Variability model

3. the input Binding model

The output model is an augmented model known as the Physical Part or Variant.

Thanks and regards

Milan

Hi Andreas,

>Andreas: Moreover, I’d be interested how you manage relations between Parts in general.

>Milan: Each Part has an interface used for interacting with the Part. Communication may be synchronous or asynchronous.

Binding Specifications define interactions between Parts using the UML Action Language to make the resulting UML model fully executable:

1. In the beginning, typically, there is a factory method “initialization” to start up the product by creating objects and initialising them.

The set of stereotypes describing a fragment of initialization is given below using an Action Language to populate behaviour:

NanoTechnology::NanoTechnology::DomainServices::Domain Support::initialization

AddAction

Operation

actionReference

Ref<GraphController> gc = CREATE GraphController();

actionText

gc.initialize();

palFilename

NanoTechnology.pal

position

StartOfBody

Actions may be added to the StartOfBody or EndOfBody of an operation.
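The effect of the position property can be pictured with a short Python sketch. This is an assumption-laden illustration, not ESP code: an operation body is treated as a plain list of action-language statements, the function name `add_action` is hypothetical, and treating the two statements from the fragment above as one inserted block is also an assumption.

```python
def add_action(body, action_lines, position):
    """Return a new operation body with `action_lines` inserted.

    `body` and `action_lines` are lists of action-language statements;
    `position` is "StartOfBody" or "EndOfBody".
    """
    if position == "StartOfBody":
        return list(action_lines) + list(body)
    if position == "EndOfBody":
        return list(body) + list(action_lines)
    raise ValueError(f"unsupported position: {position}")

# Hypothetical existing body of the initialization operation.
existing_body = ["existingSetup();"]
new_actions = [
    "Ref<GraphController> gc = CREATE GraphController();",
    "gc.initialize();",
]
for line in add_action(existing_body, new_actions, "StartOfBody"):
    print(line)
```

The input body is never modified, so the same operation can be augmented differently for each product.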

Thanks and regards

Milan

Hi Andreas,

>Andreas: Is there, e.g. some inheritance relation between an IP and a PP? If not, why not?

>Milan: Only the architect’s solution dictates how to augment the IP into the PP; ESP is only a vehicle to execute the architect’s activities in his absence i.e. at PL population time. Therefore, there is no single direct relationship between IP and PP imposed by ESP.

The architect may use any UML element from the Eclipse Papyrus UML Toolbar e.g. inheritance, association etc. to evolve IPs.

The Domain Variability and Binding Specifications are a “record” of architect’s activities that are needed to evolve the IP into the PP.

-Without ESP, the architect’s solution would be implemented with his manual activities i.e. mouse point and click, drag and drop, and typing of action language constructs.

-With ESP, his manual activities are translated into stereotypes using ESP UML profile.

Both Specifications are thus translated into ESP stereotypes and executed at PL population time to build the concrete products.

In a way, ESP serves the architect as a kind of sophisticated, programmatic record-playback device: it lets him code his steps into stereotypes and then executes those steps to create new structure and behaviour.

Thanks and Regards

Milan

Hi Andreas,

ESP Model Repository defines any PL element only once. Reuse is enabled by specifications operating on PL elements, therefore, achieving nearly 100% reuse is possible.

Thanks and regards

Milan

Hi Andreas,

On human minds and machines:

In rhetoric, paradigma is known as a type of proof. The purpose of paradigma is to provide an audience with an illustration of similar occurrences. This illustration is not meant to take the audience to a conclusion, however it is used to help guide them there.

Since ESP executes the architect’s solution steps programmatically to obtain the final solution, the result fully matches the architect’s authentic solution.

QED

Thanks and regards

Milan